Cloud-to-Cloud Migration Tools

Tools and Approaches for Data Migration to Wasabi

Are you migrating data (decommissioning the old storage capability) or duplicating data (for disaster recovery, second/third copy backup needs or as a replacement for primary data storage) from one cloud provider to Wasabi?

Welcome to Wasabi – this is a well-worn path, with many tools and approaches that make the process simple.

You have more options available than you may realize, regardless of the size of your organization or your budget. The best choice for your situation is a combined business and technology decision; no single approach applies universally.

When migrating or duplicating the data you currently have in another public cloud storage provider, you will want to consider whether the best choice involves:

- Moving data directly from your existing cloud storage provider to Wasabi, using:

  - Option 1 – The All-Cloud Migration – A migration tool that is itself in the cloud; or

  - Option 2 – Self-hosted Cloud Migration – A migration tool that is self-hosted by your organization, but otherwise connects your current public cloud provider to Wasabi;

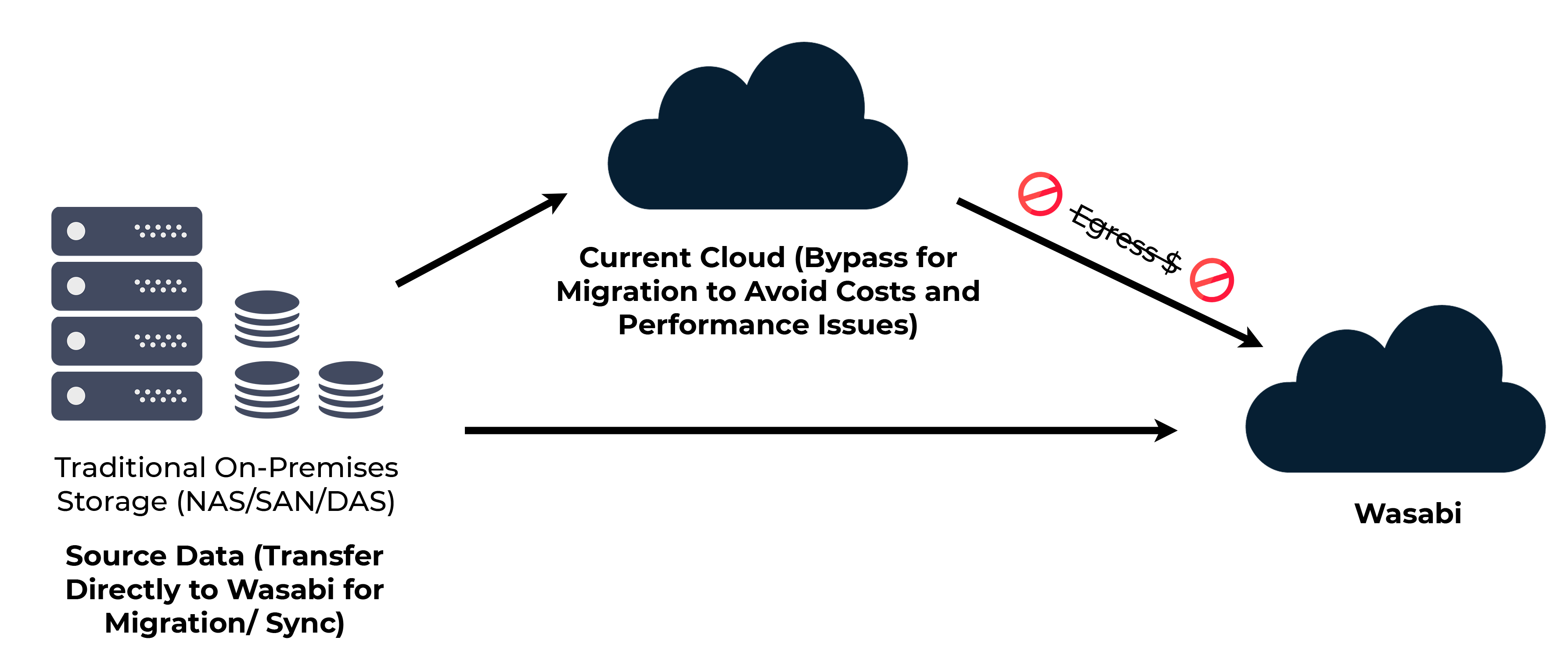

- Option 3 – Source Data Migration – Bypassing your existing cloud storage provider and moving data to Wasabi directly from the original source of the data that is stored there.

Wasabi is S3-compatible, so any tools or techniques that you already use in an AWS environment are easy to reuse.

Don’t worry, there are many proven options for any of these scenarios. The most appropriate choice depends on several factors around one-time costs, ongoing costs, performance, and your overall technical and business goals.

From a cost perspective, there are two primary costs to consider:

- The cost of egress fees (otherwise known as transfer fees), which vary depending on the public cloud vendor you are using currently;

- The cost of the migration tool itself (whether a one-time license fee, a subscription-based fee, or a “consumption-based” fee for the amount of data flowing through the tool, on top of any data transfer/egress fees charged by your original cloud provider).

Planning Your Costs — Know Your Fees

First-generation cloud providers charge not just for the amount of data you’re storing, but also for downloading, transferring, or “egressing” your data outside of their cloud. That’s often the primary reason our customers choose Wasabi: to break free of the hidden costs of the first-generation cloud business model.

As you move your data from your existing cloud storage provider, you’ll want to estimate what your costs will be from that provider if you choose to transfer from that provider directly to Wasabi.

Considerations

1. Before deciding which tool is best for you, work out what it will cost to extract your data from your current cloud implementation. Most public cloud object storage providers, such as AWS, GCP, and Azure, will charge you some amount (typically between $0.01 and $0.09 per GB) to extract (download or egress) the data from their cloud. Run the numbers so you know what budget this approach requires.
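As a rough illustration of running the numbers, the sketch below multiplies a data set size by a flat per-GB egress rate. The function name, the 50 TB data set, and the flat-rate model are assumptions made for this example; real provider pricing is often tiered by volume and region, so treat the result as a first estimate only.

```python
def estimate_egress_cost(data_gb: float, rate_per_gb: float) -> float:
    """Return an estimated egress charge in dollars, assuming a flat per-GB rate."""
    if data_gb < 0 or rate_per_gb < 0:
        raise ValueError("data size and rate must be non-negative")
    return data_gb * rate_per_gb


# Hypothetical data set: 50 TB, using decimal units (1 TB = 1,000 GB).
data_gb = 50 * 1000

# Low and high ends of the typical per-GB range mentioned above.
low = estimate_egress_cost(data_gb, 0.01)
high = estimate_egress_cost(data_gb, 0.09)

print(f"Estimated egress cost: ${low:,.2f} to ${high:,.2f}")
```

Replace the rates with the figures on your current provider's published price list before relying on the estimate.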

Sometimes, when the cost of downloading the data from your current cloud provider is too high, you may want to consider re-uploading your source data to Wasabi directly from the original data source (application or system) rather than through your current cloud provider.

From a performance perspective, this can take a long time, depending on the size of the data set and your network connection to Wasabi, but you would avoid egress fees from your current cloud provider.
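To get a feel for how long a re-upload might take, the following sketch converts a data set size and link speed into days. The function name and the 80% utilization factor are assumptions for illustration; actual throughput depends on your network, object sizes, and the tool you use.

```python
def estimate_transfer_days(data_tb: float, link_mbps: float,
                           utilization: float = 0.8) -> float:
    """Estimate days needed to upload data_tb over a link of link_mbps.

    utilization is the assumed fraction of the link actually available
    to the migration (a hypothetical default of 0.8 is used here).
    """
    if link_mbps <= 0 or not (0 < utilization <= 1):
        raise ValueError("link speed must be positive and utilization in (0, 1]")
    # Decimal units: 1 TB = 1,000,000 MB, and 8 bits per byte.
    data_megabits = data_tb * 1_000_000 * 8
    seconds = data_megabits / (link_mbps * utilization)
    return seconds / 86_400  # seconds per day


# Hypothetical example: 100 TB over a 1 Gbps (1,000 Mbps) connection.
days = estimate_transfer_days(100, 1000)
print(f"Approximate transfer time: {days:.1f} days")
```

If the estimate is longer than your migration window allows, that is a signal to look at a faster connection or a different option.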

Note: If you need improved performance compared to a standard public internet connection to Wasabi, we offer direct connections, file acceleration, and a transfer appliance that can radically improve the speed of moving large datasets to Wasabi.

If you are trying to move data from a cloud storage provider that doesn’t charge an egress fee, you should also make sure that this provider does not have a per-day or per-month data egress limit (some do). Generally, keep an eye on the fine print for all outbound traffic from your current cloud provider.
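If your provider does cap egress, a quick calculation shows the minimum time the migration will take regardless of bandwidth. This is a minimal sketch; the function name and the example cap are hypothetical, and you should take the real limit from your provider's terms.

```python
import math


def min_days_under_daily_cap(data_gb: float, daily_cap_gb: float) -> int:
    """Return the minimum number of whole days needed to egress data_gb
    when the provider allows at most daily_cap_gb per day."""
    if data_gb < 0 or daily_cap_gb <= 0:
        raise ValueError("data size must be non-negative and cap must be positive")
    return math.ceil(data_gb / daily_cap_gb)


# Hypothetical example: 10 TB (10,000 GB) with a 1,000 GB per-day limit.
print(min_days_under_daily_cap(10_000, 1_000), "days minimum")
```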

2. Evaluate the cost of using the migration tool itself. Some migration tools operate as cloud-based tools (meaning they are hosted in public cloud compute and you don't need to install the tool on your own hardware). To cover their compute and bandwidth costs and support their own business, most providers of these tools charge some sort of per-GB or per-TB transfer fee. This fee is in addition to the cost of extracting the data from your current cloud provider.

If the cost of using a cloud-based tool is not compatible with your use case (because of metered transfer fees), you may wish to consider a tool that you can deploy in your own environment (a self-hosted tool). That does not necessarily mean you won't have to pay some sort of license fee for access to the tool, but you can avoid the compute and bandwidth costs that factor into the cloud-based tool fees.
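The two considerations above can be combined into a simple side-by-side comparison. The sketch below is one way to model it, assuming each option has a per-GB egress rate, a per-GB metered tool fee, and a flat tool fee. All class names, rates, and fees are hypothetical placeholders, and the model leaves out costs such as your own compute, staff time, and bandwidth.

```python
from dataclasses import dataclass


@dataclass
class MigrationOption:
    name: str
    egress_rate_per_gb: float  # fee charged by the current cloud provider
    tool_rate_per_gb: float    # metered fee charged by a cloud-based tool
    tool_flat_fee: float       # one-time license or subscription fee

    def total_cost(self, data_gb: float) -> float:
        """Return the estimated one-time cost of moving data_gb."""
        per_gb = self.egress_rate_per_gb + self.tool_rate_per_gb
        return data_gb * per_gb + self.tool_flat_fee


# Hypothetical figures for illustration only.
options = [
    MigrationOption("Option 1: all-cloud tool", 0.09, 0.02, 0.0),
    MigrationOption("Option 2: self-hosted tool", 0.09, 0.0, 2000.0),
    MigrationOption("Option 3: re-upload from source", 0.0, 0.0, 0.0),
]

data_gb = 50_000  # 50 TB in decimal units

# List the options from cheapest to most expensive under these assumptions.
for option in sorted(options, key=lambda o: o.total_cost(data_gb)):
    print(f"{option.name}: ${option.total_cost(data_gb):,.2f}")
```

With different data sizes or fee structures the ranking can change, which is why it is worth plugging in your own quotes rather than relying on these placeholder values.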

To summarize, the following diagrams illustrate where you will typically incur costs when migrating to Wasabi from a cloud provider or from a source data system. All are perfectly valid ways to move data. The question really comes down to which approach fits your budget and how much time and effort it will take to move the data.

Option 1 — The All-Cloud Migration

Option 2 — Self-hosted Cloud Migration

Option 3 — Source Data Migration

If you are looking for recommendations on cloud-to-cloud migration tools, Wasabi provides this list of validated tools.
